You Can't 'Write Off' the AI QA Tax at the Read/Write Layer, You Can Only Move When the Bill Comes Due

Palanivel Rajan Mylsamy, Director of Engineering Program Management at Cisco, on why the QA tax on AI-generated code can't be written off, only paid earlier in the pipeline before it comes due at the database.

Architecture is the key. Ask the right things at right place to make sure keep iterating. It's not just what the AI is telling you to do; you need to direct what you want from it.

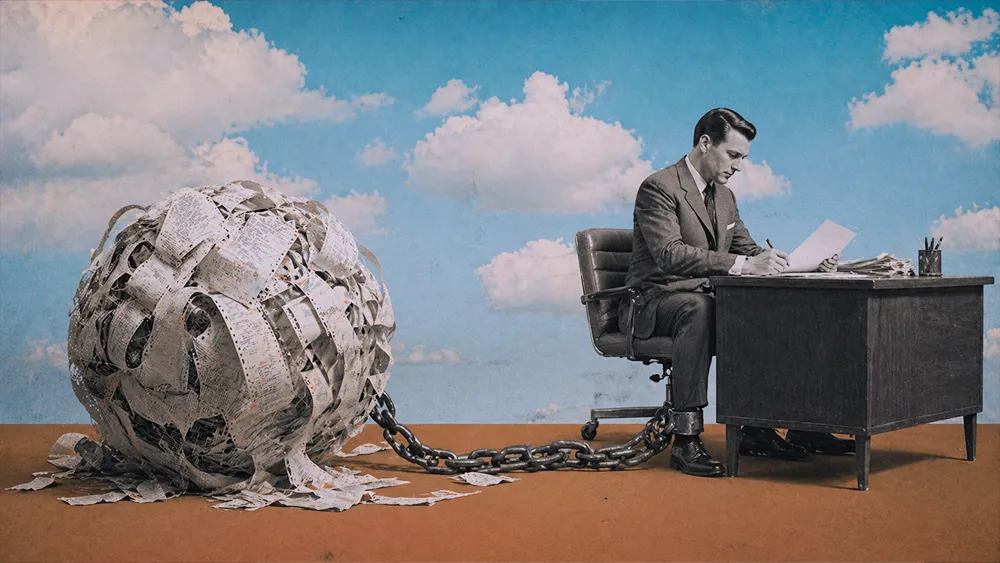

Adoption is no longer the question. 80% of developers now use AI tools in their workflows, but trust in their accuracy has fallen from 40% to 29% year over year, per Stack Overflow's 2025 Developer Survey. The same survey found that 66% of developers spend more time fixing AI-generated code that is "almost right, but not quite" than they save by accepting it in the first place. That gap, the cost of validating output that looks correct but isn't, is what engineers increasingly call the QA tax. And it is becoming the central question for teams shipping AI-generated code to production in 2026.

The timing matters because 2025 was the year of experiments, but 2026 is the year those experiments have to ship, said Palanivel Rajan Mylsamy in a recent interview with The Read Replica.

Mylsamy is Director of Engineering Program Management at Cisco, where he runs program operations across an engineering business unit. He spent more than a decade at HCL Technologies in roles ranging from technical lead to senior project manager before joining Cisco in 2019. Eighteen-plus years in, his daily workflow now runs on top of an AI chief-of-staff agent he built himself in Codex. He calls it Atlas. Atlas reads his Outlook inbox, his WebEx, his Slack, his Jira, and gives him a five-minute morning brief, work that used to take him two to three hours of manual screening across tools. Most of what he says about how AI should be governed in production is grounded in the fact that he is running it himself.

Making AI code earn its keep

For the kind of work that touches customers, Mylsamy describes a four-pillar gauntlet. Guardrails with a human in the loop. A tightened Definition of Done and Definition of Readiness. A security wrapper that scans for hardcoded tokens and exposed credentials. And subject-matter expert review that catches what automated checks miss. The basic principle is that AI-generated code should clear higher gates than human-written code, not lower ones.
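The security-wrapper pillar can be pictured as a pre-merge scan. The following is a minimal sketch, not Cisco's tooling: the rule names, the patterns, and the `scan_source` and `gate` helpers are all assumptions made for illustration, and a production scanner would carry a much larger rule set.

```python
import re

# Illustrative patterns only (assumed for this sketch); real scanners ship far more rules.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "bearer_token": re.compile(r"(?i)\bbearer\s+[a-z0-9._\-]{20,}"),
    "assigned_secret": re.compile(
        r"(?i)\b(api[_-]?key|secret|token|password)\b\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def scan_source(text):
    """Return (line_number, rule_name) for every line that matches a rule."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings


def gate(text):
    """Pass the gate only when the scan finds nothing."""
    return not scan_source(text)
```

A credential read from the environment passes this gate, while a string literal assigned to a name such as `api_key` fails it. A check like this only narrows what human and subject-matter review has to catch; it does not replace them.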

"Now we wanted to set the bar very high with the definition of 'done' and the definition of 'readiness' so that we are making it tougher for the AIs to pass through the gate," Mylsamy said. The point of raising the bar is not to slow AI down. It is to make sure that what reaches production has earned its way there. "Of course it will beat its expectation," he said. "We can do more with less."

The enterprise context matters. Cisco launched AI Defense in January 2025 as a comprehensive AI security solution and has expanded it substantially through 2026, adding agent runtime SDKs and AI-aware SASE. Mylsamy frames his approach as continuous with that posture. "Cisco has a big security function and a bigger role with enterprise customer," he said. "We have to be even more responsible in using the AI."

Token budgets as a forcing function

Once AI is generating code at scale, the metrics that used to indicate progress no longer mean what they used to. "Any metrics that we have been using to measure in the past are not relevant," Mylsamy said. He uses lines of code as the example. "With AI, you can generate however many lines of code you want. Like going from room A to room B, you can go from room A to some other room in another building and come to room B."

The point is that the output reaches the destination either way. The path matters more than the volume, but only an expert in the room can tell which path was taken. The SPACE framework, published in ACM Queue in 2021 by researchers from GitHub, Microsoft, and the University of Victoria, made this argument well before AI code generation became routine: developer productivity cannot be reduced to a single output metric, and lines of code, in particular, distort incentives. AI made the warning urgent.

What replaces LOC is not another activity metric. It is cost. And here Mylsamy is candid about a gap in his own controls. "We still haven't put that token usage or the cost limitation cap to anything that we are building," he said. "Once I'm given a cap, say 3 to 4 million tokens a week, then I know the cost I need to work within. It's like living with your budget." He acknowledges those caps would force prioritization, making him slow down on the work that does not really need AI in the first place.
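A weekly cap of the kind Mylsamy describes could be enforced with very little machinery. The sketch below is an assumption about how such a cap might look, not something his team has built: the `WeeklyTokenBudget` class and its method names are hypothetical, and the 3 million figure is taken from the low end of his example.

```python
from dataclasses import dataclass


@dataclass
class WeeklyTokenBudget:
    """Tracks token spend against a hard weekly ceiling (hypothetical helper)."""

    cap: int       # weekly ceiling, in tokens
    used: int = 0  # tokens spent so far this week

    def remaining(self):
        return self.cap - self.used

    def try_spend(self, tokens):
        """Record the spend and return True if it fits; otherwise refuse."""
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        if self.used + tokens > self.cap:
            return False
        self.used += tokens
        return True

    def reset(self):
        """Call at the start of each week."""
        self.used = 0
```

With `WeeklyTokenBudget(cap=3_000_000)`, a 2.5 million token job is accepted and a following 600,000 token job is refused, which is the forced prioritization the quote points at: the second request has to wait, shrink, or be done without AI.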

The industry is moving toward that reset. IDC's FutureScape 2026 projects that by 2027, G1000 organizations will face up to a 30% rise in underestimated AI infrastructure costs, and the FinOps Foundation has launched a dedicated FinOps for AI certification track to address it. The shift from "did the code work" to "did we burn the budget getting there" is the metric reset Mylsamy is pointing at, even if his own team has not fully implemented it yet.

AI will build exactly what you ask for, OAuth included (or not)

The clearest demonstration of his thesis is a story about an integration he was building. Mylsamy wanted Codex to wire up an integration with WebEx. Codex did exactly what he asked. Then he hit the limitation: the access token expired every 12 hours. He went back, read up on the available options, and found that OAuth authentication could solve the expiry problem. Then he prompted again with that constraint in the spec. Plan B worked.

"You asked for A and it delivered A," Mylsamy said. "Then with that A, you have some limitations you want to address through a wrapper or a container. So I found a plan B."
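The difference between plan A and plan B can be sketched as a wrapper that renews the access token before it lapses. This is an illustration under stated assumptions, not the code Codex produced: `RefreshingToken` is a hypothetical class, and the `refresh` callable stands in for whatever OAuth refresh request the real integration makes, returning a token and its lifetime in seconds.

```python
import time
from typing import Callable, Optional, Tuple


class RefreshingToken:
    """Caches an access token and renews it shortly before it expires."""

    def __init__(
        self,
        refresh: Callable[[], Tuple[str, float]],
        skew_seconds: float = 60.0,
        clock: Callable[[], float] = time.time,
    ):
        self._refresh = refresh  # hypothetical: returns (token, lifetime_seconds)
        self._skew = skew_seconds  # renew this many seconds before expiry
        self._clock = clock  # injectable so the behavior can be tested
        self._token: Optional[str] = None
        self._expires_at = 0.0

    def get(self) -> str:
        """Return a valid token, refreshing first if it is missing or near expiry."""
        now = self._clock()
        if self._token is None or now >= self._expires_at - self._skew:
            token, lifetime = self._refresh()
            self._token = token
            self._expires_at = now + lifetime
        return self._token
```

Callers ask `get()` for a token on every request and never see the 12-hour boundary. The constraint that was missing from the first prompt is now part of the design rather than something discovered in production.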

The point his story makes about AI-generated code is consistent with what empirical research is now documenting. A 2025 study on LLM prompt underspecification found that LLMs fill in unspecified prompt requirements only 41.1% of the time, with behavior that varies inconsistently across model versions and sometimes degrades by more than 20% on a routine update. The trust gap does not come from AI being wrong. It comes from AI being faithful to a request that wasn't fully informed. "It's not the fact that AI is generating," Mylsamy said. "It's that you're not prompting the AI with the right things."

Architecture is where the QA tax has to move

If the gap is in the ask, then the controls that matter most are the ones that constrain what can be asked in the first place. Mylsamy's closing thread is that the answer is architecture. "Architecture is the key," he said. "Ask the right things at right place to make sure keep iterating. It all starts from you. It's not just what the AI is telling you to do; you need to direct what you want from it."

That is where the QA tax has to move if Cisco-scale review capacity is not an option. The pre-execution gates Mylsamy describes work for an enterprise that can afford layered review processes. For the rest, the controls have to be earlier and lower in the stack: at the architecture, the schema, the access scope. Managed Postgres platforms have started shipping OAuth and identity primitives at the data layer for exactly this reason. Supabase's OAuth 2.1 server, launched in late 2025, is built to authenticate AI agents and MCP servers as existing users, with row-level security policies enforced automatically against the client_id claim. The bet is that infrastructure-level constraints catch what pre-execution code reviews cannot.
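The idea of constraining access by token claims can be shown in a few lines. The sketch below is plain Python written for illustration and is not Supabase's API or policy syntax: `visible_rows`, the `owner_id` field, and the `allowed_clients` set are assumptions, and in a real deployment the database would enforce the rule rather than application code.

```python
def visible_rows(rows, claims, allowed_clients):
    """Return only the rows the token's user owns, and only for approved clients.

    rows: list of dicts with an "owner_id" key (assumed schema)
    claims: decoded token claims, expected to carry "sub" and "client_id"
    allowed_clients: set of client_id values permitted to read at all
    """
    if claims.get("client_id") not in allowed_clients:
        return []
    user_id = claims.get("sub")
    if user_id is None:
        return []
    return [row for row in rows if row["owner_id"] == user_id]
```

An agent acting for one user sees that user's rows and nothing else, and an unapproved client sees nothing regardless of what query the generated code sends. That is the sense in which the control sits lower in the stack than code review.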

The QA tax does not get written off; it gets moved. Either it gets paid early, in the architecture, the prompt, and the Definition of Done, or it gets paid late, at the read/write layer where the customer is. Mylsamy's bet is that paying early is always cheaper.